I love a risk model, so I was pleased to read again about this project in Australia. They seem to be doing interesting work on forecasting instability and atrocities, with assistance from machine systems, and produce rankings of countries at high risk for human-rights disasters.

But I always wonder about these country-level outputs, given that there are so few cases — 200 or so countries — with relatively obvious variables. The question came to mind: “Do you really need a machine to do this?”

To test my suspicions, I made a list of the countries at risk of human-rights disasters off the top of my head, using no research and no aids other than a 5″x7″ world map, and without looking at the Australian project’s results, methodology, or criteria. I simply asked myself: what countries are at higher risk of atrocity-level human rights violations over the medium term?

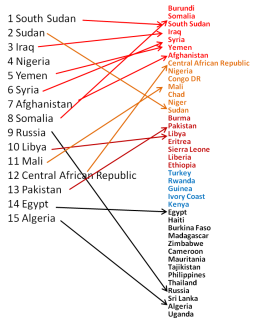

When I finally looked at their results — the left list in the paired lists here — I saw that 12 of their top 15 are in my off-the-cuff top 17 (and I think their model is just wrong about Burundi). Most fall in the top three tiers of risk as I judged them (the left column, indicated by color).

What this seems to mean is that their model is doing about the same thing as I was doing in my head: more or less multiplying together some function of (recent instability) × (poverty) × (oppression) × (diversity).
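
To make that concrete, here is a minimal sketch of what such a multiplicative score might look like, along with the kind of top-of-list overlap count I did by eye. The four factor names come from the sentence above; the 0-to-1 scales, the placeholder countries, and every number are made up for illustration. None of it is the Australian project's method or data.

```python
from math import prod

# The four factors named in the text. Scoring each on a 0-1 scale is an assumption.
FACTORS = ("instability", "poverty", "oppression", "diversity")

# Hypothetical placeholder countries with invented factor values.
countries = {
    "Country A": {"instability": 0.9, "poverty": 0.8, "oppression": 0.7, "diversity": 0.6},
    "Country B": {"instability": 0.2, "poverty": 0.3, "oppression": 0.1, "diversity": 0.5},
    "Country C": {"instability": 0.6, "poverty": 0.9, "oppression": 0.8, "diversity": 0.4},
    "Country D": {"instability": 0.1, "poverty": 0.1, "oppression": 0.2, "diversity": 0.3},
}


def risk_score(factors):
    """Multiply the four factor values together into a single score."""
    return prod(factors[name] for name in FACTORS)


def rank(scored):
    """Return country names ordered from highest to lowest risk score."""
    return sorted(scored, key=lambda name: risk_score(scored[name]), reverse=True)


def overlap(model_top, analyst_top):
    """Count how many names appear in both lists."""
    return len(set(model_top) & set(analyst_top))


ranking = rank(countries)
analyst_list = ["Country C", "Country A"]  # hypothetical off-the-cuff list

print(ranking)
print(overlap(ranking[:2], analyst_list))
```

Because the factors are multiplied rather than added, a country that scores low on any one of them drops down the list quickly, which is roughly how the judgment feels when I do it in my head.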

But maybe I’ve asked the wrong question. Which is less necessary, the algorithm, or the analyst who spent decades reading thousands of articles, if they are producing the same result?

This seems to be a case in which I could sit down with a machine system and/or its programmers for a week and train it in how I think. And that is the likely future for countless professional and expert tasks, across the world of work.